
Speech synthesis (or Text to Speech) is the computer-generated simulation of human speech. It converts human language text into human-like speech audio. In this tutorial, you will learn how to convert text to speech in Python.

Please note that I will use text-to-speech or speech synthesis interchangeably in this tutorial, as they're essentially the same thing.

In this tutorial, we won't be building neural networks and training the model from scratch to achieve results, as it is pretty complex and hard to do for regular developers. Instead, we will use some APIs, engines, and pre-trained models that offer it.

More specifically, we will use four different techniques to do text-to-speech:

- gTTS: There are a lot of APIs out there that offer speech synthesis; one of the commonly used services is Google Text to Speech; we will play around with the gTTS library.

- pyttsx3: A library that looks for pre-installed speech synthesis engines on your operating system and, therefore, performs text-to-speech without needing an Internet connection.

- openai: We'll be using the OpenAI Text to Speech API.

- Huggingface Transformers: The famous transformer library that offers a wide range of pre-trained deep learning (transformer) models that are ready to use. We'll be using a model called SpeechT5 that does this.

To clarify, this tutorial is about converting text to speech and not vice versa. If you want to convert speech to text instead, check this tutorial.

Table of contents:

- Online Text to Speech

- Offline Text to Speech

- Speech Synthesis using OpenAI API

- Speech Synthesis using 🤗 Transformers

To get started, let's install the required modules:

$ pip install gTTS pyttsx3 playsound soundfile transformers datasets sentencepiece torch openai

Online Text to Speech

As you may guess, gTTS stands for Google Text To Speech; it is a Python library that interfaces with Google Translate's text-to-speech API. It requires an Internet connection, and it's pretty easy to use.

Open up a new Python file and import:

import gtts

from playsound import playsound

It's pretty straightforward to use this library; you just need to pass text to the gTTS object, which is an interface to Google Translate's Text to Speech API:

# create the gTTS object; the request is sent when the audio is written out

tts = gtts.gTTS("Hello world")

At this point, we have only created the gTTS object; the text is sent to the API, and the audio is retrieved, when we write the result out. Let's save this audio to a file:

# save the audio file

tts.save("hello.mp3")

Awesome, you'll see a new file appear in the current directory; let's play it using the playsound module installed previously:

# play the audio file

playsound("hello.mp3")

And that's it! You'll hear a robotic voice saying the text you just passed in.

It isn't available only in English; you can use other languages as well by passing the lang parameter:

# in spanish

tts = gtts.gTTS("Hola Mundo", lang="es")

tts.save("hola.mp3")

playsound("hola.mp3")

If you don't want to save it to a file and just play it directly, then you should use tts.write_to_fp(), which accepts an io.BytesIO() object to write into; check this link for more information.
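To make the in-memory route concrete, here is a minimal sketch. The helper name synthesize_to_bytes and its tts_factory parameter are hypothetical (they are not part of gTTS); the parameter only exists so the function can be tried without a network connection. It relies on write_to_fp() writing the MP3 bytes into any file-like object:

```python
import io


def synthesize_to_bytes(text, lang="en", tts_factory=None):
    """Return the synthesized MP3 audio as bytes without touching the disk."""
    if tts_factory is None:
        # imported lazily so this file still loads where gTTS is not installed
        from gtts import gTTS
        tts_factory = gTTS
    buffer = io.BytesIO()
    # write_to_fp() writes the MP3 bytes into the file-like object we pass
    tts_factory(text, lang=lang).write_to_fp(buffer)
    return buffer.getvalue()
```

Calling synthesize_to_bytes("Hello world") needs an Internet connection, just like tts.save(). The returned bytes can then be handed to any audio library that accepts in-memory data.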

To get the list of available languages, use this:

# all available languages along with their IETF tag

print(gtts.lang.tts_langs())

Here are the supported languages:

{'af': 'Afrikaans', 'sq': 'Albanian', 'ar': 'Arabic', 'hy': 'Armenian', 'bn': 'Bengali', 'bs': 'Bosnian', 'ca': 'Catalan', 'hr': 'Croatian', 'cs': 'Czech', 'da': 'Danish', 'nl': 'Dutch', 'en': 'English', 'eo': 'Esperanto', 'et': 'Estonian', 'tl': 'Filipino', 'fi': 'Finnish', 'fr': 'French', 'de': 'German', 'el': 'Greek', 'gu': 'Gujarati', 'hi': 'Hindi', 'hu': 'Hungarian', 'is': 'Icelandic', 'id': 'Indonesian', 'it': 'Italian', 'ja': 'Japanese', 'jw': 'Javanese', 'kn': 'Kannada', 'km': 'Khmer', 'ko': 'Korean', 'la': 'Latin', 'lv': 'Latvian', 'mk': 'Macedonian', 'ml': 'Malayalam', 'mr': 'Marathi', 'my': 'Myanmar (Burmese)', 'ne': 'Nepali', 'no': 'Norwegian', 'pl': 'Polish', 'pt': 'Portuguese', 'ro': 'Romanian', 'ru': 'Russian', 'sr': 'Serbian', 'si': 'Sinhala', 'sk': 'Slovak', 'es': 'Spanish', 'su': 'Sundanese', 'sw': 'Swahili', 'sv': 'Swedish', 'ta': 'Tamil', 'te': 'Telugu', 'th': 'Thai', 'tr': 'Turkish', 'uk': 'Ukrainian', 'ur': 'Urdu', 'vi': 'Vietnamese', 'cy': 'Welsh', 'zh-cn': 'Chinese (Mandarin/China)', 'zh-tw': 'Chinese (Mandarin/Taiwan)', 'en-us': 'English (US)', 'en-ca': 'English (Canada)', 'en-uk': 'English (UK)', 'en-gb': 'English (UK)', 'en-au': 'English (Australia)', 'en-gh': 'English (Ghana)', 'en-in': 'English (India)', 'en-ie': 'English (Ireland)', 'en-nz': 'English (New Zealand)', 'en-ng': 'English (Nigeria)', 'en-ph': 'English (Philippines)', 'en-za': 'English (South Africa)', 'en-tz': 'English (Tanzania)', 'fr-ca': 'French (Canada)', 'fr-fr': 'French (France)', 'pt-br': 'Portuguese (Brazil)', 'pt-pt': 'Portuguese (Portugal)', 'es-es': 'Spanish (Spain)', 'es-us': 'Spanish (United States)'}

Offline Text to Speech

Now you know how to use Google's API, but what if you want to use text-to-speech technologies offline?

Well, the pyttsx3 library comes to the rescue. It is a text-to-speech conversion library in Python that looks for TTS engines pre-installed on your platform and uses them. Here are the text-to-speech synthesizers that this library uses:

- SAPI5 on Windows XP, Windows Vista, 8, 8.1, 10 and 11.

- NSSpeechSynthesizer on Mac OS X.

- espeak on Ubuntu Desktop Edition.

Here are the main features of the pyttsx3 library:

- It works fully offline

- You can choose among different voices that are installed on your system

- Controlling the speed of speech

- Tweaking volume

- Saving the speech audio into a file

Note: If you're on a Linux system and the voice output is not working with this library, then you should install espeak, FFmpeg, and libespeak1:

$ sudo apt update && sudo apt install espeak ffmpeg libespeak1

To get started with this library, open up a new Python file and import it:

import pyttsx3

Now, we need to initialize the TTS engine:

# initialize Text-to-speech engine

engine = pyttsx3.init()

To convert some text, we need to use say() and runAndWait() methods:

# convert this text to speech

text = "Python is a great programming language"

engine.say(text)

# play the speech

engine.runAndWait()

The say() method adds an utterance to the event queue, while the runAndWait() method blocks while it processes all the queued commands and returns once the queue is empty. So you can call say() multiple times and run a single runAndWait() method at the end to hear the synthesis. Try it out!
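The queueing behavior can be sketched as follows. The helper speak_all is a hypothetical name, and the optional engine argument is only there so that an already initialized engine (or a stand-in object) can be passed:

```python
def speak_all(sentences, engine=None):
    """Queue every sentence with say(), then play them with one runAndWait()."""
    if engine is None:
        # imported lazily so this file still loads where pyttsx3 is not installed
        import pyttsx3
        engine = pyttsx3.init()
    for sentence in sentences:
        # nothing is spoken yet; each call only adds an utterance to the queue
        engine.say(sentence)
    # blocks until every queued utterance has been spoken
    engine.runAndWait()
    return engine
```

For example, speak_all(["Python is great", "So is text to speech"]) should speak both sentences back to back.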

This library provides us with some properties we can tweak based on our needs. For instance, let's get the details of the speaking rate:

# get details of speaking rate

rate = engine.getProperty("rate")

print(rate)

Output:

200

Alright, let's change this to 300 (make the speaking rate much faster):

# setting new voice rate (faster)

engine.setProperty("rate", 300)

engine.say(text)

engine.runAndWait()

Or slower:

# slower

engine.setProperty("rate", 100)

engine.say(text)

engine.runAndWait()

Another useful property is voices, which allows us to get details of all voices available on your machine:

# get details of all voices available

voices = engine.getProperty("voices")

print(voices)

Here is the output in my case:

[<pyttsx3.voice.Voice object at 0x000002D617F00A20>, <pyttsx3.voice.Voice object at 0x000002D617D7F898>, <pyttsx3.voice.Voice object at 0x000002D6182F8D30>]

As you can see, my machine has three voices installed. Let's use the second, for example:

# set another voice

engine.setProperty("voice", voices[1].id)

engine.say(text)

engine.runAndWait()

You can also save the audio as a file using the save_to_file() method, instead of playing the sound using say() method:

# saving speech audio into a file

engine.save_to_file(text, "python.mp3")

engine.runAndWait()

A new MP3 file will appear in the current directory; check it out!
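The properties above (rate and voice), plus the volume property mentioned in the feature list, can be set in one place. Below is a minimal sketch; configure_engine is a hypothetical helper, and picking a voice by a substring of its name is just one possible approach, since the voice names depend on what is installed on your system:

```python
def configure_engine(engine, rate=None, volume=None, voice_name=None):
    """Apply optional rate, volume, and voice settings to a pyttsx3 engine."""
    if rate is not None:
        engine.setProperty("rate", rate)
    if volume is not None:
        # pyttsx3 expects a volume between 0.0 and 1.0, so we clamp the value
        engine.setProperty("volume", max(0.0, min(1.0, volume)))
    if voice_name is not None:
        # pick the first installed voice whose name contains voice_name
        for voice in engine.getProperty("voices"):
            if voice_name.lower() in (voice.name or "").lower():
                engine.setProperty("voice", voice.id)
                break
        else:
            raise ValueError(f"no installed voice matches {voice_name!r}")
    return engine
```

For instance, configure_engine(pyttsx3.init(), rate=150, volume=0.8) returns an engine that speaks a bit slower and quieter than the defaults.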

Speech Synthesis using OpenAI API

In this section, we'll be using the newly released OpenAI audio models. Before we get started, make sure to update the openai library to the latest version:

$ pip install --upgrade openai

Next, you must create an OpenAI account and navigate to the API key page to Create a new secret key. Make sure to save this somewhere safe and do not share it with anyone.
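One common way to keep the key out of your source code is to read it from an environment variable. Here is a minimal sketch, assuming you have exported the key as OPENAI_API_KEY beforehand; the helper name load_api_key is hypothetical:

```python
import os


def load_api_key(env_var="OPENAI_API_KEY"):
    """Read the API key from an environment variable instead of hard-coding it."""
    key = os.environ.get(env_var)
    if not key:
        raise RuntimeError(f"Please set the {env_var} environment variable first")
    return key
```

The returned value can be passed as the api_key argument when creating the client.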

Next, let's open up a new Python file and initialize our OpenAI API client:

from openai import OpenAI

# initialize the OpenAI API client

api_key = "YOUR_OPENAI_API_KEY"

client = OpenAI(api_key=api_key)

After that, we can simply use client.audio.speech.create() to perform text to speech:

# sample text to generate speech from

text = """In his miracle year, he published four groundbreaking papers.

These outlined the theory of the photoelectric effect, explained Brownian motion,

introduced special relativity, and demonstrated mass-energy equivalence."""

# generate speech from the text

response = client.audio.speech.create(
    model="tts-1", # the model to use, there is tts-1 and tts-1-hd
    voice="nova", # the voice to use, there is alloy, echo, fable, onyx, nova, and shimmer
    input=text, # the text to generate speech from
    speed=1.0, # the speed of the generated speech, ranging from 0.25 to 4.0
)

# save the generated speech to a file

response.stream_to_file("openai-output.mp3")

This is a paid API, and at the time of writing, there are two models: tts-1 for $0.015 per 1,000 characters and tts-1-hd for $0.03 per 1,000 characters. tts-1 is cheaper and faster, whereas tts-1-hd provides higher-quality audio.
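Since billing is per character, you can roughly estimate the cost of a request before sending it. The sketch below only uses the prices quoted above, which may have changed since; estimate_cost is a hypothetical helper, not part of the openai library:

```python
# USD per 1,000 characters, as quoted in this tutorial (check the current pricing)
PRICE_PER_1K_CHARS = {"tts-1": 0.015, "tts-1-hd": 0.03}


def estimate_cost(text, model="tts-1"):
    """Rough cost in USD of synthesizing text with the given model."""
    return len(text) / 1000 * PRICE_PER_1K_CHARS[model]
```

For example, a 2,000-character text costs about 2 × $0.015 = $0.03 with tts-1, and twice that with tts-1-hd.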

There are currently 6 voices you can choose from. I've chosen nova, but you can use alloy, echo, fable, onyx, and shimmer.

You can also experiment with the speed parameter; the default is 1.0. Values below 1.0 produce slower speech, and values above 1.0 produce faster speech.

Another parameter is response_format. The default is mp3, but you can set it to opus, aac, or flac.
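To avoid paying for a request that fails because of a typo, you may want to check these parameters before calling the API. Here is a minimal sketch; build_speech_request is a hypothetical helper, and the allowed values are only the ones listed in this tutorial (the API may accept more, so extend the sets if needed):

```python
MODELS = {"tts-1", "tts-1-hd"}
VOICES = {"alloy", "echo", "fable", "onyx", "nova", "shimmer"}
FORMATS = {"mp3", "opus", "aac", "flac"}


def build_speech_request(text, model="tts-1", voice="nova", speed=1.0,
                         response_format="mp3"):
    """Validate the parameters and return keyword arguments for speech.create()."""
    if model not in MODELS:
        raise ValueError(f"unknown model: {model!r}")
    if voice not in VOICES:
        raise ValueError(f"unknown voice: {voice!r}")
    if response_format not in FORMATS:
        raise ValueError(f"unknown response format: {response_format!r}")
    if not 0.25 <= speed <= 4.0:
        raise ValueError("speed must be between 0.25 and 4.0")
    return {
        "model": model,
        "voice": voice,
        "input": text,
        "speed": speed,
        "response_format": response_format,
    }
```

You can then unpack the result into the call, for example client.audio.speech.create(**build_speech_request(text, speed=1.25, response_format="flac")).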

Speech Synthesis using 🤗 Transformers

In this section, we will use the 🤗 Transformers library to load a pre-trained text-to-speech transformer model. More specifically, we will use the SpeechT5 model that is fine-tuned for speech synthesis on LibriTTS. You can learn more about the model in this paper.

To get started, let's install the required libraries (if you haven't already):

$ pip install soundfile transformers datasets sentencepiece torch

Open up a new Python file named tts_transformers.py and import the following:

from transformers import SpeechT5Processor, SpeechT5ForTextToSpeech, SpeechT5HifiGan

from datasets import load_dataset

import torch

import random

import string

import soundfile as sf

device = "cuda" if torch.cuda.is_available() else "cpu"

Let's load everything:

# load the processor

processor = SpeechT5Processor.from_pretrained("microsoft/speecht5_tts")

# load the model

model = SpeechT5ForTextToSpeech.from_pretrained("microsoft/speecht5_tts").to(device)

# load the vocoder, that is the voice encoder

vocoder = SpeechT5HifiGan.from_pretrained("microsoft/speecht5_hifigan").to(device)

# we load this dataset to get the speaker embeddings

embeddings_dataset = load_dataset("Matthijs/cmu-arctic-xvectors", split="validation")

The processor is the tokenizer of the input text, whereas the model is the actual model that converts text to speech.

The vocoder (short for voice encoder) is responsible for the final production of the audio: it turns an intermediate acoustic representation of speech into a waveform that we can save and listen to.

In our case, the SpeechT5 model transforms the input text we provide into a sequence of mel-filterbank features (a type of representation of the sound). These features are acoustic features often used in speech and audio processing, derived from a Fourier transform of the signal.

The HiFi-GAN vocoder we're using takes these representations and synthesizes them into actual audible speech.
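To see the two stages separately, here is a minimal sketch. It relies on generate_speech() returning the mel spectrogram when no vocoder is passed; the helper name spectrogram_then_waveform is hypothetical, and input_ids and speaker_embeddings are assumed to be tensors already placed on the same device as the model:

```python
def spectrogram_then_waveform(input_ids, speaker_embeddings, model, vocoder):
    """Run SpeechT5 and the HiFi-GAN vocoder as two explicit steps."""
    # stage 1: without a vocoder, generate_speech() returns the mel spectrogram
    spectrogram = model.generate_speech(input_ids, speaker_embeddings)
    # stage 2: the vocoder turns the spectrogram into an audio waveform
    waveform = vocoder(spectrogram)
    return spectrogram, waveform
```

Passing vocoder=vocoder directly to generate_speech() should give the same waveform in a single call, which is the shorter form used in the rest of this tutorial.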

Finally, we load a dataset that will help us get the speaker's voice vectors to synthesize speech with various speakers. Here are the speakers:

# speaker ids from the embeddings dataset

speakers = {
    'awb': 0,     # Scottish male
    'bdl': 1138,  # US male
    'clb': 2271,  # US female
    'jmk': 3403,  # Canadian male
    'ksp': 4535,  # Indian male
    'rms': 5667,  # US male
    'slt': 6799   # US female
}

Next, let's make our function that does all the speech synthesis for us:

def save_text_to_speech(text, speaker=None):
    # preprocess text
    inputs = processor(text=text, return_tensors="pt").to(device)
    if speaker is not None:
        # load xvector containing speaker's voice characteristics from a dataset
        speaker_embeddings = torch.tensor(embeddings_dataset[speaker]["xvector"]).unsqueeze(0).to(device)
    else:
        # random vector, meaning a random voice
        speaker_embeddings = torch.randn((1, 512)).to(device)
    # generate speech with the models
    speech = model.generate_speech(inputs["input_ids"], speaker_embeddings, vocoder=vocoder)
    if speaker is not None:
        # if we have a speaker, we use the speaker's ID in the filename
        output_filename = f"{speaker}-{'-'.join(text.split()[:6])}.mp3"
    else:
        # if we don't have a speaker, we use a random string in the filename
        random_str = ''.join(random.sample(string.ascii_letters+string.digits, k=5))
        output_filename = f"{random_str}-{'-'.join(text.split()[:6])}.mp3"
    # save the generated speech to a file with 16 kHz sampling rate
    sf.write(output_filename, speech.cpu().numpy(), samplerate=16000)
    # return the filename for reference
    return output_filename

The function takes the text and the speaker (optional) as arguments and does the following:

- It tokenizes the input text into a sequence of token IDs.

- If the speaker is passed, then we use the speaker vector to mimic the sound of the passed speaker during synthesis.

- If it's not passed, we simply make a random vector using torch.randn(), although I do not think it's a reliable way of making a random voice.

- Next, we use our model.generate_speech() method to generate the speech tensor; it takes the input IDs, speaker embeddings, and the vocoder.

- Finally, we make our output filename and save it with a 16 kHz sampling rate. (A funny thing you can do: when you reduce the sampling rate to 12 kHz or 8 kHz, you'll get a deeper and slower voice, and vice versa: a higher-pitched and faster voice when you increase it to values like 22050 or 24000.)
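The sampling rate trick in the last step comes down to simple arithmetic: the file contains the same samples, but the player reads them at a different pace. Here is a small sketch of that reasoning; playback_effect is a hypothetical helper, and 16000 is the rate used by the function above:

```python
def playback_effect(num_samples, playback_rate, native_rate=16000):
    """Return (duration in seconds, speed and pitch factor) for a playback rate."""
    # the same samples played at a lower rate take longer to play
    duration = num_samples / playback_rate
    # a factor below 1.0 means slower and deeper, above 1.0 faster and higher
    factor = playback_rate / native_rate
    return duration, factor
```

For example, 32,000 samples last 2 seconds at 16 kHz, but 4 seconds when saved with samplerate=8000, at half the speed and pitch.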

Let's use the function now:

# generate speech with a US female voice

save_text_to_speech("Python is my favorite programming language", speaker=speakers["slt"])

This will generate speech with the US female voice (my favorite among all the speakers). The following generates speech with a random voice:

# generate speech with a random voice

save_text_to_speech("Python is my favorite programming language")

Let's now call the function with all the speakers so you can compare speakers:

# a challenging text with all speakers

text = """In his miracle year, he published four groundbreaking papers.

These outlined the theory of the photoelectric effect, explained Brownian motion,

introduced special relativity, and demonstrated mass-energy equivalence."""

for speaker_name, speaker in speakers.items():
    output_filename = save_text_to_speech(text, speaker)
    print(f"Saved {output_filename}")

# random speaker
output_filename = save_text_to_speech(text)
print(f"Saved {output_filename}")

Output:

Saved 0-In-his-miracle-year,-he-published.mp3

Saved 1138-In-his-miracle-year,-he-published.mp3

Saved 2271-In-his-miracle-year,-he-published.mp3

Saved 3403-In-his-miracle-year,-he-published.mp3

Saved 4535-In-his-miracle-year,-he-published.mp3

Saved 5667-In-his-miracle-year,-he-published.mp3

Saved 6799-In-his-miracle-year,-he-published.mp3

Saved lz7Rh-In-his-miracle-year,-he-published.mp3

Listen to 6799-In-his-miracle-year,-he-published.mp3 to hear the US female voice (slt).

Conclusion

Great, that's it for this tutorial; I hope it helps you build your application or maybe your own virtual assistant in Python!

To conclude, we have used four different methods for text-to-speech:

- Online Text to speech using the gTTS library

- Offline Text to speech using pyttsx3 library that uses an existing engine on your OS.

- The convenient Audio OpenAI API.

- Finally, we used 🤗 Transformers to perform text-to-speech (offline) using our computing resources.

So, to wrap it up, if you want reliable synthesis, you can go for the OpenAI Audio API, the Google TTS API, or any other reliable API you choose. If you want a reliable but offline method, you can also use the SpeechT5 transformer. And if you just want to make it work quickly and without an Internet connection, you can use the pyttsx3 library.

You can get the complete code for all the methods used in the tutorial here.

Here is the documentation for the libraries used:

- gTTS (Google Text-to-Speech)

- pyttsx3 - Text-to-speech x-platform

- OpenAI Text to Speech

- SpeechT5 (TTS task)

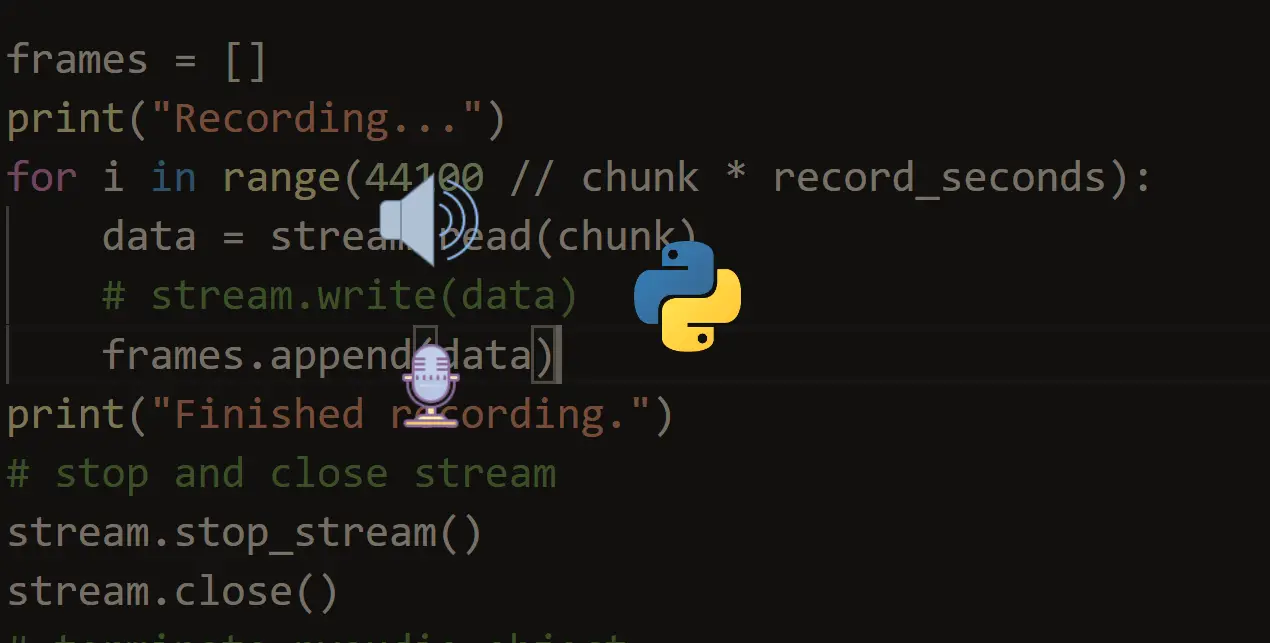

Related: How to Play and Record Audio in Python.

Happy Coding ♥
