Large amounts of data cannot be stored and managed effectively unless the data is deliberately structured. This practice is called big data modeling: designing data models that can cope with the complexity, diversity, and speed of data generated in big data environments. Such models let businesses make data-driven decisions with big data.

Table of contents:

- Challenges in Modeling Big Data

- Big Data Modeling in ClickHouse

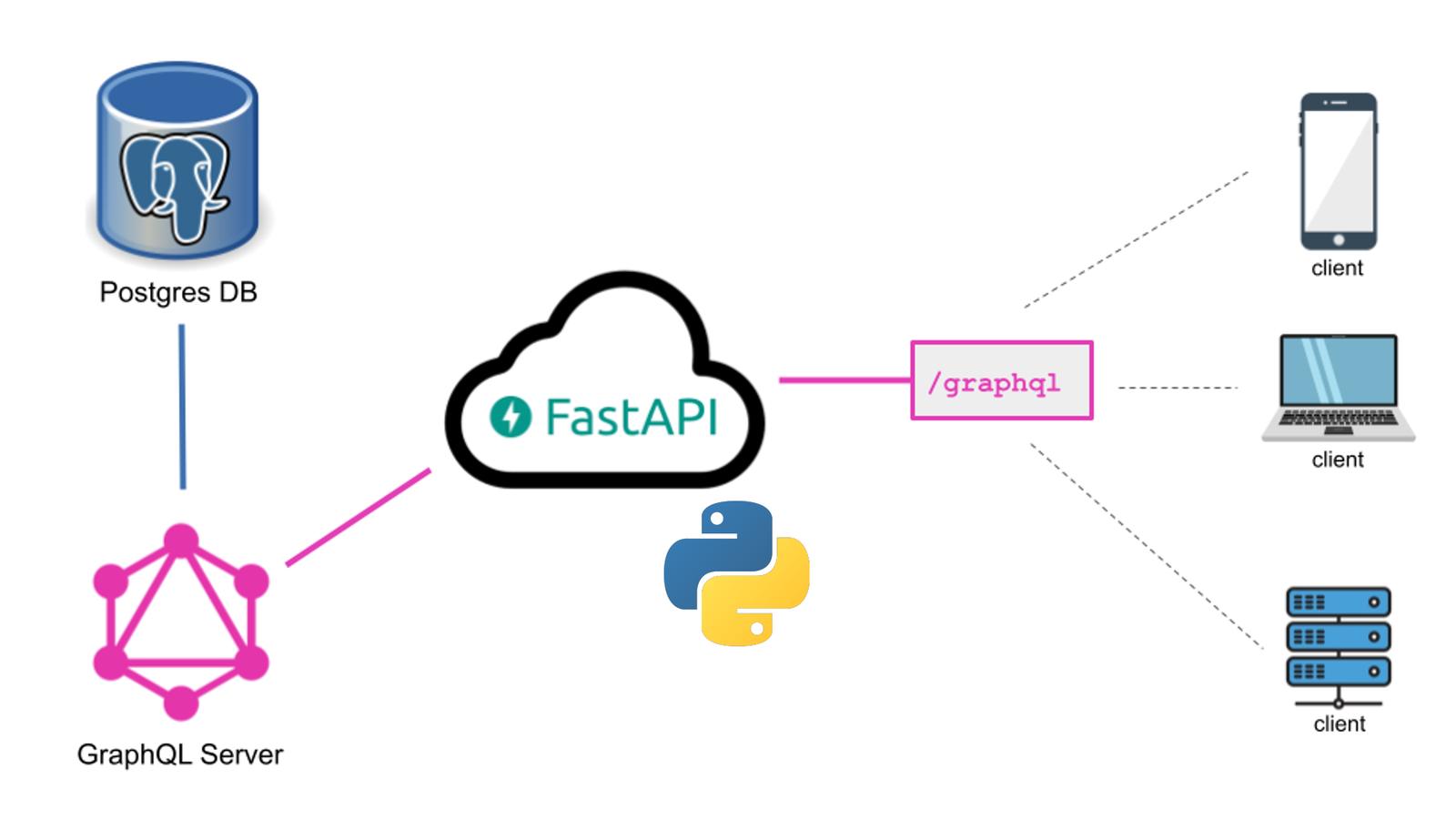

- Big Data Modeling in PostgreSQL

- Conclusion

Challenges in Modeling Big Data

Modeling big data presents several unique challenges:

- Volume. Big data is characterized by its immense volume, often reaching petabytes or more. Traditional modeling approaches may not scale effectively to handle such massive datasets.

- Variety. Big data encompasses diverse data types, including structured, semi-structured, and unstructured data. Modeling must accommodate this variety to extract valuable insights.

- Velocity. Data streams in rapidly in big data environments. Modeling should support real-time or near-real-time processing to keep up with the high data velocity.

- Complexity. Big data often involves complex relationships and data dependencies that require sophisticated modeling techniques.

- Cost. Storing and processing big data can be costly. Effective modeling should strike a balance between performance and resource utilization.

This article compares how ClickHouse and PostgreSQL address these challenges in big data modeling.

Big Data Modeling in ClickHouse

ClickHouse is an open-source, column-oriented, distributed database management system designed for high-performance analytics on large datasets. It is optimized for read-heavy workloads and is well suited to real-time analytical processing. Its architecture is built to store and query very large amounts of data efficiently, which makes it a popular choice for big data modeling.

Data Modeling Approaches in ClickHouse

ClickHouse supports various data modeling approaches tailored for big data:

- Columnar Storage. ClickHouse stores each column's values together on disk, which improves query performance for analytical workloads. Similar values sit next to each other and compress well, and a query reads only the columns it references, which reduces I/O.

- Materialized Views. ClickHouse materialized views behave like insert triggers: as rows arrive in a source table, a query transforms or aggregates them and writes the result to a target table. Expensive aggregations are therefore precomputed at insert time instead of being recalculated for every query.

- Distributed Processing. ClickHouse's distributed architecture enables horizontal scaling across multiple nodes, making it capable of handling immense datasets and high query concurrency.
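The materialized-view idea from the list above can be sketched as an aggregate that is updated whenever rows are inserted, so reads never rescan the raw data. This is a plain-Python illustration and not ClickHouse code; `EventsTable`, `DailyCountView`, and the `day` and `country` fields are hypothetical names chosen for the example.

```python
from collections import defaultdict


class EventsTable:
    """Toy source table that notifies attached views on every insert."""

    def __init__(self):
        self.rows = []
        self.views = []

    def attach(self, view):
        self.views.append(view)

    def insert(self, batch):
        self.rows.extend(batch)
        for view in self.views:
            view.on_insert(batch)  # only the newly inserted rows are processed


class DailyCountView:
    """Hypothetical 'materialized view': running event count per (day, country)."""

    def __init__(self):
        self.counts = defaultdict(int)

    def on_insert(self, batch):
        for row in batch:
            self.counts[(row["day"], row["country"])] += 1


events = EventsTable()
daily = DailyCountView()
events.attach(daily)

events.insert([
    {"day": "2024-01-01", "country": "DE"},
    {"day": "2024-01-01", "country": "US"},
])
events.insert([
    {"day": "2024-01-01", "country": "DE"},
    {"day": "2024-01-02", "country": "DE"},
])

# Reading the aggregate is a dictionary lookup, not a scan of events.rows.
print(daily.counts[("2024-01-01", "DE")])  # prints 2
```

The trade-off this sketch hints at is that work moves from query time to insert time, which suits workloads where the same aggregate is read far more often than the underlying data changes.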

Advantages of ClickHouse for Big Data Modeling

ClickHouse offers several advantages for big data modeling:

- Exceptional Query Performance. Its columnar storage and distributed processing capabilities result in rapid query execution for analytical workloads.

- Scalability. ClickHouse scales horizontally, allowing organizations to add nodes as data volumes grow.

- Compression. Efficient data compression techniques minimize storage costs for large datasets.

- Real-time Processing. ClickHouse can handle real-time data streams, making it suitable for applications requiring up-to-the-minute insights.
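To make the query-performance and compression points above more concrete, here is a minimal sketch using plain Python lists as a stand-in for storage. It assumes a tiny hypothetical table with `day`, `country`, and `latency_ms` columns, and it uses run-length encoding only because it is easy to follow; ClickHouse's real codecs (for example LZ4 and ZSTD) work differently.

```python
from itertools import groupby

# Hypothetical sample data, already sorted by (day, country).
rows = [
    ("2024-01-01", "DE", 120),
    ("2024-01-01", "DE", 95),
    ("2024-01-01", "DE", 143),
    ("2024-01-01", "US", 88),
    ("2024-01-02", "US", 101),
    ("2024-01-02", "US", 113),
]
column_names = ("day", "country", "latency_ms")


def to_columns(rows, names):
    """Pivot row tuples into one list per column."""
    return {name: [row[i] for row in rows] for i, name in enumerate(names)}


def rle_encode(values):
    """Collapse runs of equal adjacent values into (value, run_length) pairs."""
    return [(value, sum(1 for _ in run)) for value, run in groupby(values)]


columns = to_columns(rows, column_names)

# An aggregate over one column touches only that column's values.
latencies = columns["latency_ms"]
avg_latency = sum(latencies) / len(latencies)
values_read_columnar = len(latencies)                  # 6 values
values_read_row_store = len(rows) * len(column_names)  # 18 values

# Sorted, repetitive columns collapse into a handful of runs.
country_runs = rle_encode(columns["country"])
day_runs = rle_encode(columns["day"])

print(avg_latency)   # prints 110.0
print(country_runs)  # prints [('DE', 3), ('US', 3)]
```

In this toy case the average needs a third of the values a row layout would load, and the six-entry `country` column shrinks to two runs. Real savings depend on the data and the chosen codec.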

Disadvantages of ClickHouse for Big Data Modeling

While ClickHouse is a powerful tool for big data modeling, it may not be ideal for every scenario:

- Limited Write Performance. ClickHouse ingests large batched inserts efficiently, but it is primarily designed for read-heavy workloads. Frequent small inserts, row-level updates, and deletes are handled less well than in systems optimized for transactional data.

- Complex Configuration. Setting up and configuring ClickHouse for optimal performance can be complex, particularly for those new to the platform.

- Narrow Use Case. ClickHouse excels in analytics but may not be the best choice for transactional databases or applications requiring frequent updates.

Understanding ClickHouse's strengths and weaknesses is essential for organizations considering it for their big data modeling needs.

Big Data Modeling in PostgreSQL

PostgreSQL, often referred to as Postgres, is a robust open-source relational database management system (RDBMS) known for its extensibility and flexibility. Originally designed for traditional relational data, PostgreSQL has evolved to handle a wide range of data types and complex workloads, making it a candidate for big data modeling as well.

Data Modeling Approaches in PostgreSQL

PostgreSQL provides several data modeling approaches for big data scenarios:

- Table Partitioning. PostgreSQL supports declarative table partitioning, which divides a large table into smaller, more manageable partitions by range, list, or hash of a partition key. Queries that filter on the key can skip irrelevant partitions, and old data can be removed by dropping a whole partition, which simplifies maintenance.

- Indexing. PostgreSQL offers various indexing techniques, including B-tree, GIN, and GiST, to optimize query execution on large datasets.

- Extensions. Extensions like PostGIS for spatial data and hstore for semi-structured key-value data provide additional modeling capabilities for diverse data types.
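As a rough illustration of the table-partitioning idea in the list above, the following plain-Python sketch routes rows into monthly partitions and skips partitions that cannot match a date filter. It is a conceptual model, not PostgreSQL code; `MonthlyPartitionedTable` and its methods are hypothetical names.

```python
from collections import defaultdict
from datetime import date


def month_bounds(key):
    """Return [first_day, first_day_of_next_month) for a (year, month) key."""
    year, month = key
    first = date(year, month, 1)
    nxt = date(year + 1, 1, 1) if month == 12 else date(year, month + 1, 1)
    return first, nxt


class MonthlyPartitionedTable:
    """Toy range-partitioned table keyed by the month of a date column."""

    def __init__(self):
        self.partitions = defaultdict(list)

    def insert(self, event_date, payload):
        key = (event_date.year, event_date.month)
        self.partitions[key].append((event_date, payload))

    def select(self, start, end):
        """Return rows with start <= event_date < end, plus partitions scanned."""
        scanned = []
        for key in sorted(self.partitions):
            first, nxt = month_bounds(key)
            if first < end and nxt > start:  # partition overlaps the filter
                scanned.append(key)
        rows = [
            row
            for key in scanned
            for row in self.partitions[key]
            if start <= row[0] < end
        ]
        return rows, scanned

    def drop_partition(self, key):
        """Remove a whole partition at once, e.g. to expire old data."""
        return self.partitions.pop(key, [])


table = MonthlyPartitionedTable()
table.insert(date(2024, 1, 15), "a")
table.insert(date(2024, 2, 3), "b")
table.insert(date(2024, 2, 20), "c")
table.insert(date(2024, 3, 9), "d")

rows, scanned = table.select(date(2024, 2, 1), date(2024, 3, 1))
print(scanned)                 # prints [(2024, 2)]
print([p for _, p in rows])    # prints ['b', 'c']

dropped = table.drop_partition((2024, 1))
```

Only the February partition is scanned for the February query, and expiring January is a single operation on one partition rather than a row-by-row delete.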

Advantages of PostgreSQL for Big Data Modeling

PostgreSQL offers numerous advantages for big data modeling:

- ACID Compliance. PostgreSQL is ACID-compliant, making it suitable for applications that require data consistency and reliability.

- Data Type Flexibility. PostgreSQL supports structured data alongside semi-structured types such as JSON and JSONB, arrays, and free text, making it adaptable to various data sources.

- Extensibility. PostgreSQL's extensibility allows users to define custom data types, operators, and functions to meet specific modeling requirements.

- Community and Ecosystem. PostgreSQL has a strong and active user community and a rich ecosystem of extensions and plugins for various data modeling needs.

Disadvantages of PostgreSQL for Big Data Modeling

While PostgreSQL is a versatile RDBMS, it may have limitations in some big data scenarios:

- Performance. PostgreSQL may not offer the same level of performance for analytical workloads as specialized columnar databases like ClickHouse.

- Scalability. Scaling PostgreSQL for very large datasets can be challenging, requiring careful design and potentially additional tools.

- Complexity. Handling diverse data types and complex modeling scenarios can require in-depth knowledge of PostgreSQL and may involve a steeper learning curve.

Organizations should weigh these advantages and disadvantages when considering PostgreSQL for big data modeling, particularly when dealing with large and complex datasets.

Conclusion

The right choice between ClickHouse and PostgreSQL for big data modeling depends on your needs, expertise, and infrastructure. ClickHouse favors read-heavy analytics at scale, while PostgreSQL favors transactional consistency and flexible data types. Each case is unique, so assess these factors carefully against your goals before committing to either system.
