In computing, a thread is a sequence of programmed instructions executed within a program. When two threads run in a single Python program, they run concurrently (that is, they take turns) rather than in parallel. Unlike processes, threads in Python don't each run on a separate CPU core; they share the same memory space and can efficiently read and write the same variables.

Threads are like mini-processes. Some people even call them lightweight processes, because a thread lives inside a process and can do the job of one. Still, the two are quite different. Here are the main differences between threads and processes in Python:

Process

- A process can contain one or more threads

- Processes have separate memory spaces

- Two processes can run on different CPU cores (which makes communication between them harder, but gives better CPU performance)

- Processes have more overhead than threads (creating and destroying processes takes more time)

- Running multiple processes pays off mainly for CPU-bound tasks

Thread

- Threads share the same memory space and can read and write to shared variables (with synchronization, of course)

- Two threads in a single Python program cannot execute Python code at the same time

- Running multiple threads pays off mainly for I/O-bound tasks
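To make the shared-memory point from the list above concrete, here is a minimal sketch. The names `counter`, `counter_lock`, and `add_many` are made up for this illustration; the only real API used is the standard `threading` module. Four threads update the same variable, and a lock keeps the updates from stepping on each other:

```python
import threading

# Shared state: every thread in this process sees the same `counter`.
counter = 0
counter_lock = threading.Lock()


def add_many(n):
    """Increment the shared counter n times, taking the lock for each update."""
    global counter
    for _ in range(n):
        # Without the lock, the read-modify-write below could interleave
        # between threads and some updates could be lost.
        with counter_lock:
            counter += 1


threads = [threading.Thread(target=add_many, args=(10_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(counter)  # 40000
```

Separate processes would each get their own copy of `counter`, which is why they need explicit communication instead of a simple lock.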

Now, you may be wondering why we need to use threads if they can't run simultaneously. Before we answer that question, let's see why threads can't run simultaneously.

Python's GIL

The GIL (Global Interpreter Lock) is probably the most controversial topic among Python developers. It is basically a lock that prevents two threads from executing simultaneously in the same Python interpreter. Some people don't like that, while others claim it isn't a problem, since libraries such as NumPy work around the limitation by running their heavy computations in external C code.

So why do we need threads at all? Python releases the lock while a thread waits for blocking I/O to finish. If your code makes a request to an API, queries a database on disk, or downloads something from the internet, the waiting thread does not hold the lock, because these operations happen outside the GIL, and other threads can run in the meantime. In a nutshell, we only benefit from threads in I/O-bound work.
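Here is a small sketch of that effect that needs no network access. It assumes `time.sleep()` is a fair stand-in for a blocking I/O call (it also releases the GIL while waiting); `fake_io`, `run_sequential`, and `run_threaded` are hypothetical helper names used only for this illustration:

```python
import threading
import time
from time import perf_counter


def fake_io(seconds):
    """Stand-in for a blocking network or disk call; sleeping releases the GIL."""
    time.sleep(seconds)


def run_sequential(n, seconds):
    """Run n fake I/O calls one after another and return the elapsed time."""
    start = perf_counter()
    for _ in range(n):
        fake_io(seconds)
    return perf_counter() - start


def run_threaded(n, seconds):
    """Run n fake I/O calls in n threads and return the elapsed time."""
    start = perf_counter()
    threads = [threading.Thread(target=fake_io, args=(seconds,)) for _ in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return perf_counter() - start


sequential = run_sequential(4, 0.2)
threaded = run_threaded(4, 0.2)
print(f"sequential: {sequential:.2f}s, threaded: {threaded:.2f}s")
```

The sequential run should take roughly the sum of the waits, while the threaded run should take roughly as long as a single wait, because the threads spend their waiting time without holding the lock.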

Single Thread

For demonstration, the code below downloads some files from the internet (a perfect I/O-bound task) sequentially, without using threads. It requires the requests library, which you can install with pip3 install requests:

```python
import requests
from time import perf_counter

# read 1024 bytes every time
buffer_size = 1024


def download(url):
    # download the body of response by chunk, not immediately
    response = requests.get(url, stream=True)
    # get the file name
    filename = url.split("/")[-1]
    with open(filename, "wb") as f:
        for data in response.iter_content(buffer_size):
            # write data read to the file
            f.write(data)


if __name__ == "__main__":
    urls = [
        "https://cdn.pixabay.com/photo/2018/01/14/23/12/nature-3082832__340.jpg",
        "https://cdn.pixabay.com/photo/2013/10/02/23/03/dawn-190055__340.jpg",
        "https://cdn.pixabay.com/photo/2016/10/21/14/50/plouzane-1758197__340.jpg",
        "https://cdn.pixabay.com/photo/2016/11/29/05/45/astronomy-1867616__340.jpg",
        "https://cdn.pixabay.com/photo/2014/07/28/20/39/landscape-404072__340.jpg",
    ] * 5
    t = perf_counter()
    for url in urls:
        download(url)
    print(f"Time took: {perf_counter() - t:.2f}s")
```

Once you execute it, you'll notice new images appear in the current directory and you'll get something like this as output:

```
Time took: 13.76s
```

The code above is pretty straightforward: it iterates over the images and downloads them one by one. That took about 13.8s (it will vary depending on your Internet connection), but either way, we're wasting a lot of time here. If you need performance, consider using threads.

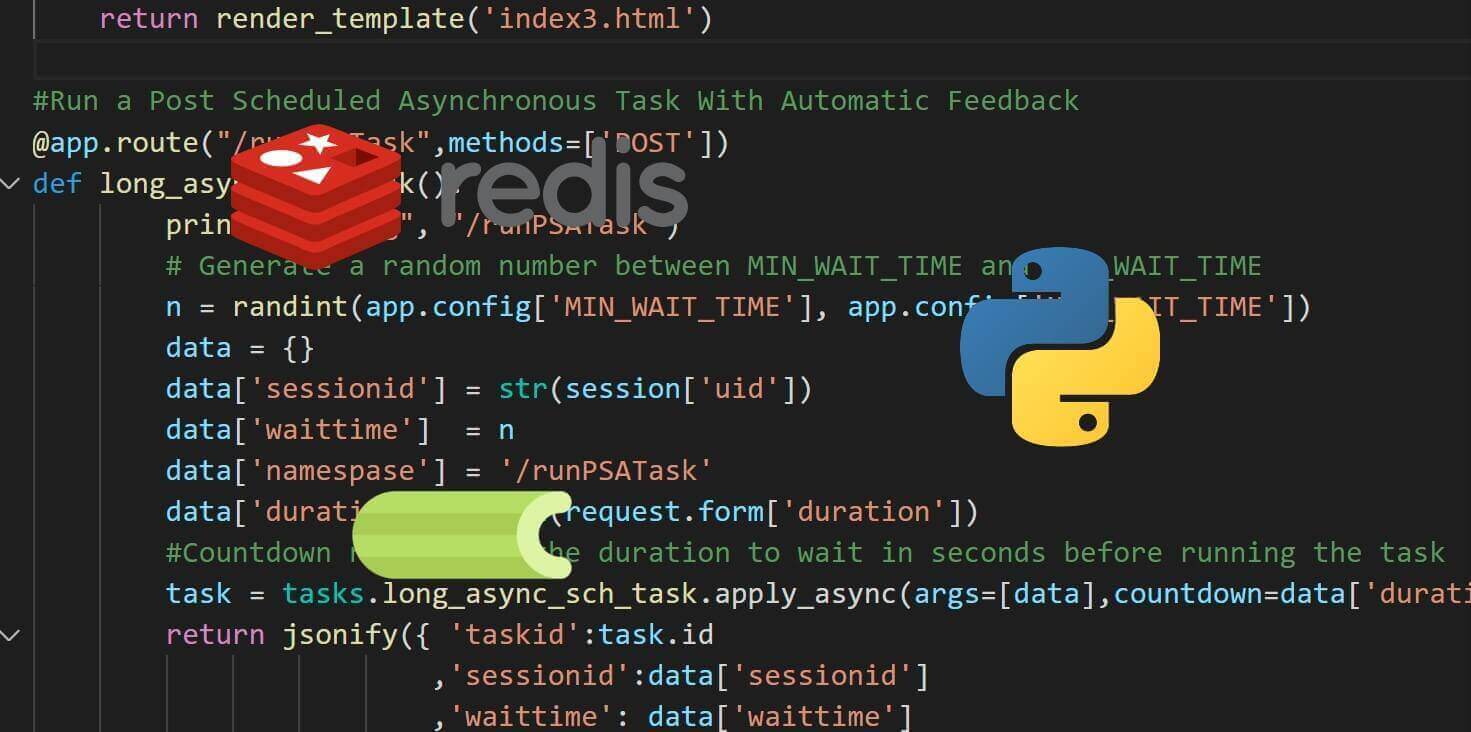

Multiple Threads

```python
import requests
from concurrent.futures import ThreadPoolExecutor
from time import perf_counter

# number of threads to spawn
n_threads = 5

# read 1024 bytes every time
buffer_size = 1024


def download(url):
    # download the body of response by chunk, not immediately
    response = requests.get(url, stream=True)
    # get the file name
    filename = url.split("/")[-1]
    with open(filename, "wb") as f:
        for data in response.iter_content(buffer_size):
            # write data read to the file
            f.write(data)


if __name__ == "__main__":
    urls = [
        "https://cdn.pixabay.com/photo/2018/01/14/23/12/nature-3082832__340.jpg",
        "https://cdn.pixabay.com/photo/2013/10/02/23/03/dawn-190055__340.jpg",
        "https://cdn.pixabay.com/photo/2016/10/21/14/50/plouzane-1758197__340.jpg",
        "https://cdn.pixabay.com/photo/2016/11/29/05/45/astronomy-1867616__340.jpg",
        "https://cdn.pixabay.com/photo/2014/07/28/20/39/landscape-404072__340.jpg",
    ] * 5
    t = perf_counter()
    with ThreadPoolExecutor(max_workers=n_threads) as pool:
        pool.map(download, urls)
    print(f"Time took: {perf_counter() - t:.2f}s")
```

The code has changed only a little. We are now using the ThreadPoolExecutor class from the concurrent.futures package. It creates a pool with the number of threads we specify, and the pool.map() method then handles splitting the urls list across those threads.

Here is how long it lasted for me:

```
Time took: 3.85s
```

That is about 3.6x faster (at least for me) using 5 threads. Try tuning the number of threads to spawn on your machine and see if you can optimize it further.
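One detail of pool.map() is worth a small sketch: it returns an iterator, and an exception raised inside a worker only surfaces when the corresponding result is retrieved. The example below uses a hypothetical `fake_download()` that sleeps instead of touching the network, so the item names and delays are assumptions made purely for illustration. It also shows `submit()` with `as_completed()`, which lets you handle each task's outcome as it finishes:

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed


def fake_download(item):
    """Hypothetical stand-in for download(url): sleeps, then returns a result."""
    name, delay = item
    time.sleep(delay)
    if name == "broken":
        raise ValueError(f"could not fetch {name}")
    return f"{name} done"


items = [("a", 0.3), ("b", 0.1), ("c", 0.2)]

with ThreadPoolExecutor(max_workers=3) as pool:
    # map() yields results in the order of the inputs, not in completion order.
    ordered = list(pool.map(fake_download, items))

with ThreadPoolExecutor(max_workers=3) as pool:
    # submit() returns one Future per task; as_completed() yields them as they finish.
    futures = {
        pool.submit(fake_download, item): item[0]
        for item in items + [("broken", 0.0)]
    }
    outcomes = {}
    for future in as_completed(futures):
        name = futures[future]
        try:
            outcomes[name] = future.result()
        except ValueError as exc:
            # An exception raised in the worker is re-raised by result().
            outcomes[name] = f"failed: {exc}"

print(ordered)   # ['a done', 'b done', 'c done']
print(outcomes)
```

If you care whether individual downloads failed, consuming the results this way is one option; whether it is worth the extra code depends on your use case.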

That's not the only way to create threads. You can also use the convenient threading module together with a queue. Here is an equivalent version of the code:

import requests

from threading import Thread

from queue import Queue

# thread-safe queue initialization

q = Queue()

# number of threads to spawn

n_threads = 5

# read 1024 bytes every time

buffer_size = 1024

def download():

global q

while True:

# get the url from the queue

url = q.get()

# download the body of response by chunk, not immediately

response = requests.get(url, stream=True)

# get the file name

filename = url.split("/")[-1]

with open(filename, "wb") as f:

for data in response.iter_content(buffer_size):

# write data read to the file

f.write(data)

# we're done downloading the file

q.task_done()

if __name__ == "__main__":

urls = [

"https://cdn.pixabay.com/photo/2018/01/14/23/12/nature-3082832__340.jpg",

"https://cdn.pixabay.com/photo/2013/10/02/23/03/dawn-190055__340.jpg",

"https://cdn.pixabay.com/photo/2016/10/21/14/50/plouzane-1758197__340.jpg",

"https://cdn.pixabay.com/photo/2016/11/29/05/45/astronomy-1867616__340.jpg",

"https://cdn.pixabay.com/photo/2014/07/28/20/39/landscape-404072__340.jpg",

] * 5

# fill the queue with all the urls

for url in urls:

q.put(url)

# start the threads

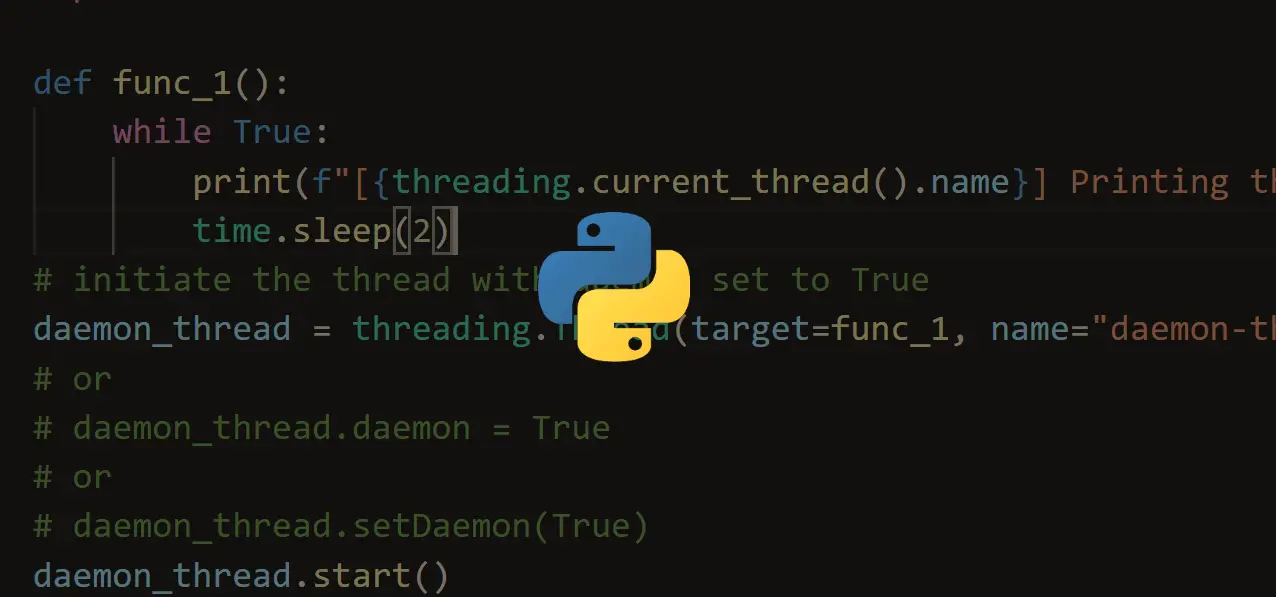

for t in range(n_threads):

worker = Thread(target=download)

# daemon thread means a thread that will end when the main thread ends

worker.daemon = True

worker.start()

# wait until the queue is empty

q.join()This is a good alternative too, we are using a synchronized queue here in which we fill it with all the image URLs we want to download and then spawn the threads manually that each executes download() function.

As you may have noticed, the download() function uses an infinite loop that never ends. I know this is counter-intuitive, but it makes sense once we know that the thread executing this function is a daemon thread, which means it will end as soon as the main thread ends.

So we use the q.put() method to add an item and q.get() to take that item and consume it (in this case, download it). This is the producer-consumer problem that is widely discussed in computer science.

Now, what happens if two threads call the q.get() method (or q.put()) at the same time? As I said before, this queue is thread-safe (synchronized), which means it uses a lock under the hood that prevents two threads from getting the same item simultaneously.

When we finish downloading that file, we call the q.task_done() method which tells the queue that the processing on the task (on that item) is complete.

Returning to the main thread: we created the threads and started them using the start() method. After that, we need a way to block the main thread until all the work is completed. That is exactly what q.join() does: it blocks until all items in the queue have been gotten and processed.
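Daemon threads with an infinite loop are one way to end the workers. As a hedged alternative sketch (not what the code above does), the workers can also be stopped explicitly by putting one sentinel value per thread on the queue. The `SENTINEL` marker, the `worker()` function, and the uppercase "work" are assumptions made for this illustration only:

```python
from queue import Queue
from threading import Thread

SENTINEL = None  # hypothetical marker meaning "no more work"


def worker(q, results):
    """Consume items until a sentinel arrives, then return normally."""
    while True:
        item = q.get()
        try:
            if item is SENTINEL:
                break  # leave the loop so the thread can finish
            # Stand-in for the real work (downloading a URL in the article).
            results.put(item.upper())
        finally:
            # Always mark the item as processed, even if the work raised.
            q.task_done()


n_threads = 3
items = ["a.jpg", "b.jpg", "c.jpg", "d.jpg", "e.jpg"]
q = Queue()
results = Queue()  # a second thread-safe queue to collect the output

workers = [Thread(target=worker, args=(q, results)) for _ in range(n_threads)]
for w in workers:
    w.start()

for item in items:
    q.put(item)
for _ in workers:
    q.put(SENTINEL)  # one sentinel per worker

q.join()             # every item (and sentinel) has been processed
for w in workers:
    w.join()         # the threads themselves have exited

processed = sorted(results.get() for _ in items)
print(processed)  # ['A.JPG', 'B.JPG', 'C.JPG', 'D.JPG', 'E.JPG']
```

With this approach the threads exit on their own, so they don't need to be daemon threads, at the cost of a little more bookkeeping.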

Conclusion

To conclude: first, you shouldn't use threads if you don't need to speed up your code. If you only run it once a month, for example, threads just add complexity that can make debugging harder.

Second, if your code is heavily CPU-bound, you shouldn't use threads either, because of the GIL. If you wish to run your code on multiple cores, use the multiprocessing module, which provides similar functionality but with processes instead of threads.
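As a rough sketch of the process-based route, the example below uses `ProcessPoolExecutor` from `concurrent.futures` (which is built on top of multiprocessing) because its interface mirrors the thread pool shown earlier. The `count_primes()` function and the chosen limits are made up for illustration; any CPU-heavy function would do:

```python
from concurrent.futures import ProcessPoolExecutor


def count_primes(limit):
    """CPU-bound work: count the primes below `limit` by trial division."""
    count = 0
    for n in range(2, limit):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


if __name__ == "__main__":
    limits = [20_000, 30_000, 40_000, 50_000]
    # Each task runs in a separate process with its own interpreter and its own GIL,
    # so the tasks can use different CPU cores.
    with ProcessPoolExecutor(max_workers=4) as pool:
        results = list(pool.map(count_primes, limits))
    print(dict(zip(limits, results)))
```

The `if __name__ == "__main__":` guard matters here: on platforms that start worker processes by re-importing the main module, it keeps the workers from spawning pools of their own.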

Third, you should only use threads on I/O tasks such as writing to a disk, waiting for a network resource, etc.

Finally, you should use ThreadPoolExecutor when you know all the items to process before you start consuming them. However, if your items are not pre-defined and are discovered while the code is executing (as is generally the case in web scraping), then consider using the synchronized queue with the threading module.
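The "items discovered while running" case can be sketched without any network access. Below, a hypothetical in-memory `LINKS` dictionary plays the role of a website, and the `enqueue()` and `crawl()` helpers are assumptions for this illustration. Workers add newly found pages to the same queue they consume from:

```python
from queue import Queue
from threading import Lock, Thread

# Hypothetical in-memory "site": each page maps to the pages it links to.
LINKS = {
    "/": ["/a", "/b"],
    "/a": ["/b", "/c"],
    "/b": [],
    "/c": ["/"],
}

q = Queue()
seen = set()
seen_lock = Lock()


def enqueue(page):
    """Schedule a page unless it has already been scheduled."""
    with seen_lock:
        if page in seen:
            return
        seen.add(page)
    q.put(page)


def crawl():
    while True:
        page = q.get()
        try:
            # Stand-in for fetching the page and extracting its links.
            for link in LINKS.get(page, []):
                enqueue(link)
        finally:
            # New pages are queued before this call, so q.join() cannot
            # return while there is still work to discover.
            q.task_done()


for _ in range(3):
    Thread(target=crawl, daemon=True).start()

enqueue("/")
q.join()
print(sorted(seen))  # ['/', '/a', '/b', '/c']
```

A plain pool.map() call would not fit this case well, since the full list of pages is not known when the work starts.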

Here are some tutorials in which we used threads:

- How to Make a Subdomain Scanner in Python

- How to Make a Port Scanner in Python using Socket Library

- How to Write a Keylogger in Python from Scratch

- How to Create Fake Access Points using Scapy in Python

Happy Coding ♥
