Google Drive lets you store your files in the cloud and access them anytime, from anywhere in the world. In this tutorial, you will learn how to list your Google Drive files, search them, download stored files, and upload local files to your drive programmatically using Python.

Here is the table of contents:

- Enable the Drive API

- List Files and Directories

- Upload Files

- Search for Files and Directories

- Download Files

To get started, let's install the required libraries for this tutorial:

    pip3 install google-api-python-client google-auth-httplib2 google-auth-oauthlib tabulate requests tqdm

Enable the Drive API

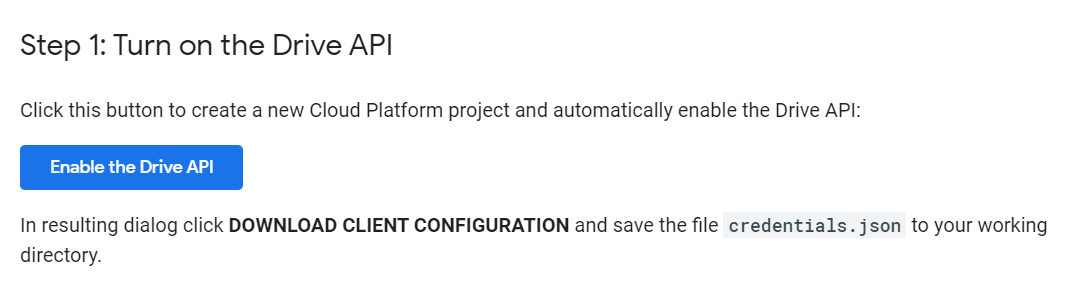

Enabling the Google Drive API is very similar to enabling other Google APIs, such as the Gmail API, YouTube API, or Google Search Engine API. First, you need a Google account with Google Drive enabled. Head to this page and click the "Enable the Drive API" button as shown below:

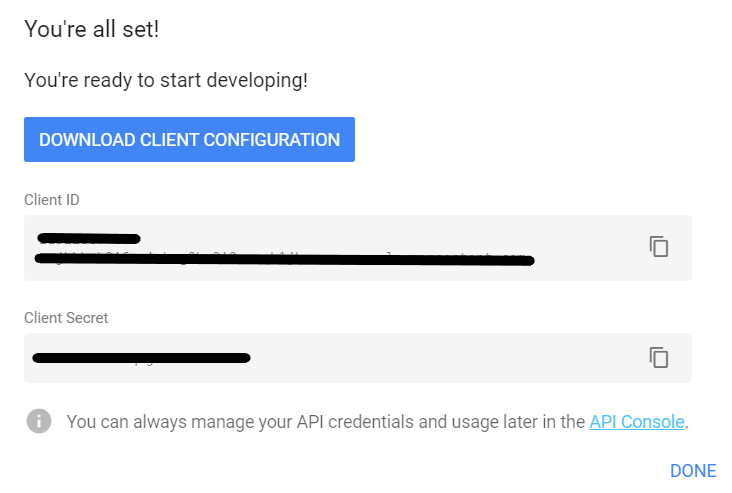

A new window will pop up; choose your type of application. I will stick with the "Desktop app" and then hit the "Create" button. After that, you'll see another window appear saying you're all set:

Download your credentials by clicking the "Download Client Configuration" button and then "Done".

Finally, put the downloaded credentials.json file into your working directory (i.e., the directory where you run the upcoming Python scripts).
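
If the scripts later fail during authentication, a misplaced or wrong credentials.json is a common cause. The following is a minimal sketch of a sanity check you could run first. The check_client_secrets name is hypothetical, and the check assumes that client configuration files keep their settings under a top-level "installed" key (desktop apps) or "web" key (web apps):

```python
import json
import os


def check_client_secrets(path="credentials.json"):
    """Return the client type found in a client secrets file.

    Raises FileNotFoundError if the file is missing and ValueError if it
    is not valid JSON or lacks the expected top-level key.
    """
    if not os.path.isfile(path):
        raise FileNotFoundError(
            f"{path} not found in {os.getcwd()}; download it and place it here"
        )
    with open(path, encoding="utf-8") as f:
        data = json.load(f)  # json.JSONDecodeError is a ValueError subclass
    # Assumption: desktop clients use the "installed" key, web clients "web".
    for client_type in ("installed", "web"):
        if client_type in data:
            return client_type
    raise ValueError(f"{path} does not look like an OAuth client secrets file")
```

Calling check_client_secrets() from the working directory should return "installed" for the "Desktop app" choice made above.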

List Files and Directories

Before we do anything, we need to authenticate our code to our Google account. The function below does that:

    import pickle
    import os
    from googleapiclient.discovery import build
    from google_auth_oauthlib.flow import InstalledAppFlow
    from google.auth.transport.requests import Request
    from tabulate import tabulate

    # If modifying these scopes, delete the file token.pickle.
    SCOPES = ['https://www.googleapis.com/auth/drive.metadata.readonly']

    def get_gdrive_service():
        creds = None
        # The file token.pickle stores the user's access and refresh tokens, and is
        # created automatically when the authorization flow completes for the first
        # time.
        if os.path.exists('token.pickle'):
            with open('token.pickle', 'rb') as token:
                creds = pickle.load(token)
        # If there are no (valid) credentials available, let the user log in.
        if not creds or not creds.valid:
            if creds and creds.expired and creds.refresh_token:
                creds.refresh(Request())
            else:
                flow = InstalledAppFlow.from_client_secrets_file(
                    'credentials.json', SCOPES)
                creds = flow.run_local_server(port=0)
            # Save the credentials for the next run
            with open('token.pickle', 'wb') as token:
                pickle.dump(creds, token)
        # return Google Drive API service
        return build('drive', 'v3', credentials=creds)

We've imported the necessary modules. The above function was taken from the Google Drive quickstart page. It looks for the token.pickle file to authenticate with your Google account. If the file isn't there (or the stored credentials are invalid and can't be refreshed), it uses credentials.json to prompt you for authentication in your browser. After that, it initializes the Google Drive API service and returns it.

Moving on to the main function, let's list the files in our drive:

    def main():
        """Shows basic usage of the Drive v3 API.
        Prints the names and ids of the first 5 files the user has access to.
        """
        service = get_gdrive_service()
        # Call the Drive v3 API
        results = service.files().list(
            pageSize=5, fields="nextPageToken, files(id, name, mimeType, size, parents, modifiedTime)").execute()
        # get the results
        items = results.get('files', [])
        # list the first 5 files & folders
        list_files(items)

So we used the service.files().list() method to return the first five files/folders the user has access to by specifying pageSize=5. We passed some useful fields to the fields parameter to get details about the listed files, such as mimeType (type of file), size in bytes, parent directory IDs, and the last modified date and time. Check this page to see all other fields.
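
A single call only returns one page of results. To walk through every page, the response's nextPageToken can be fed back into the next call through the pageToken parameter. Here is a minimal sketch of that loop as a generator; iter_files is a hypothetical helper name, and the sketch assumes the service object returned by get_gdrive_service(). Note that nextPageToken has to be listed in fields, otherwise it is not part of the response:

```python
def iter_files(service, page_size=100, fields="nextPageToken, files(id, name)"):
    """Yield every file dict, following nextPageToken until it runs out."""
    page_token = None
    while True:
        response = service.files().list(
            pageSize=page_size,
            fields=fields,
            pageToken=page_token,
        ).execute()
        # hand out the files of the current page one by one
        yield from response.get("files", [])
        page_token = response.get("nextPageToken")
        if not page_token:
            # no token means this was the last page
            break
```

For example, list_files(list(iter_files(service, fields="nextPageToken, files(id, name, mimeType, size, parents, modifiedTime)"))) would print the whole drive instead of the first five entries.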

Notice that we called list_files(items), which we haven't defined yet. Since the results are a list of dictionaries, they aren't that readable. We pass items to this function to print them in a human-readable format:

    def list_files(items):
        """given items returned by Google Drive API, prints them in a tabular way"""
        if not items:
            # empty drive
            print('No files found.')
        else:
            rows = []
            for item in items:
                # get the File ID
                file_id = item["id"]
                # get the name of file
                name = item["name"]
                try:
                    # parent directory ID
                    parents = item["parents"]
                except KeyError:
                    # has no parents
                    parents = "N/A"
                try:
                    # get the size in nice bytes format (KB, MB, etc.)
                    size = get_size_format(int(item["size"]))
                except KeyError:
                    # not a file, may be a folder
                    size = "N/A"
                # get the Google Drive type of file
                mime_type = item["mimeType"]
                # get last modified date time
                modified_time = item["modifiedTime"]
                # append everything to the list
                rows.append((file_id, name, parents, size, mime_type, modified_time))
            print("Files:")
            # convert to a human readable table
            table = tabulate(rows, headers=["ID", "Name", "Parents", "Size", "Type", "Modified Time"])
            # print the table
            print(table)

We converted the items list of dictionaries into the rows list of tuples and then passed it to the tabulate module we installed earlier to print it in a nice format. Let's call the main() function:

    if __name__ == '__main__':
        main()

See my output:

    Files:
    ID                                 Name                            Parents                  Size      Type                          Modified Time
    ---------------------------------  ------------------------------  -----------------------  --------  ----------------------------  ------------------------
    1FaD2BVO_ppps2BFm463JzKM-gGcEdWVT  some_text.txt                   ['0AOEK-gp9UUuOUk9RVA']  31.00B    text/plain                    2020-05-15T13:22:20.000Z
    1vRRRh5OlXpb-vJtphPweCvoh7qYILJYi  google-drive-512.png            ['0AOEK-gp9UUuOUk9RVA']  15.62KB   image/png                     2020-05-14T23:57:18.000Z
    1wYY_5Fic8yt8KSy8nnQfjah9EfVRDoIE  bbc.zip                         ['0AOEK-gp9UUuOUk9RVA']  863.61KB  application/x-zip-compressed  2019-08-19T09:52:22.000Z
    1FX-KwO6EpCMQg9wtsitQ-JUqYduTWZub  Nasdaq 100 Historical Data.csv  ['0AOEK-gp9UUuOUk9RVA']  363.10KB  text/csv                      2019-05-17T16:00:44.000Z
    1shTHGozbqzzy9Rww9IAV5_CCzgPrO30R  my_python_code.py               ['0AOEK-gp9UUuOUk9RVA']  1.92MB    text/x-python                 2019-05-13T14:21:10.000Z

These are the files in my Google Drive. Notice that the Size column is scaled to a readable unit (B, KB, MB, and so on); that's because we used the get_size_format() function in list_files(). Here is the code for it:

    def get_size_format(b, factor=1024, suffix="B"):
        """
        Scale bytes to its proper byte format
        e.g:
            1253656 => '1.20MB'
            1253656678 => '1.17GB'
        """
        for unit in ["", "K", "M", "G", "T", "P", "E", "Z"]:
            if b < factor:
                return f"{b:.2f}{unit}{suffix}"
            b /= factor
        return f"{b:.2f}Y{suffix}"

The above function must be defined before main() is called; otherwise, Python raises a NameError. For convenience, check the full code.
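
The Modified Time column in the output above is still a raw UTC timestamp. If you want it to be as readable as the Size column, it can be parsed with the standard library. This is a minimal sketch, assuming every timestamp has the same shape as the ones shown above (fractional seconds followed by a trailing Z); parse_drive_time and format_drive_time are hypothetical helper names:

```python
from datetime import datetime, timezone


def parse_drive_time(value):
    """Parse a timestamp such as '2020-05-15T13:22:20.000Z' as UTC."""
    parsed = datetime.strptime(value, "%Y-%m-%dT%H:%M:%S.%fZ")
    # the trailing Z means UTC, so attach that time zone explicitly
    return parsed.replace(tzinfo=timezone.utc)


def format_drive_time(value, fmt="%Y-%m-%d %H:%M"):
    """Return the timestamp converted to the machine's local time zone."""
    return parse_drive_time(value).astimezone().strftime(fmt)
```

Inside list_files(), format_drive_time(item["modifiedTime"]) could then replace the raw value before it is appended to rows.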

Remember that after you run the script, you'll be prompted in your default browser to select your Google account and grant your application the scopes you specified earlier. Don't worry, this only happens the first time you run it; token.pickle is then saved, and the authentication details are loaded from it on later runs.

Note: Sometimes, you'll encounter a "This application is not validated" warning (since Google didn't verify your app) after choosing your Google account. It's okay to go to the "Advanced" section and grant the application access to your account.

Upload Files

To upload files to our Google Drive, we need to change the SCOPES list we specified earlier and add the permission to create files/folders:

    import pickle
    import os.path
    from googleapiclient.discovery import build
    from google_auth_oauthlib.flow import InstalledAppFlow
    from google.auth.transport.requests import Request
    from googleapiclient.http import MediaFileUpload

    # If modifying these scopes, delete the file token.pickle.
    SCOPES = ['https://www.googleapis.com/auth/drive.metadata.readonly',
              'https://www.googleapis.com/auth/drive.file']

Different scopes mean different privileges, so you need to delete the token.pickle file in your working directory and rerun the code to authenticate with the new scopes.
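
Forgetting to delete token.pickle after a scope change is easy to do. One way around it, sketched below under the assumption that you are free to rename the token file, is to derive the file name from the scope list, so a changed list simply points at a file that doesn't exist yet and triggers a fresh login. The token_path_for helper is hypothetical and not part of the quickstart code:

```python
import hashlib


def token_path_for(scopes, prefix="token", suffix=".pickle"):
    """Build a token file name that changes whenever the scope list changes."""
    # sort first so the same scopes in a different order map to the same file
    joined = " ".join(sorted(scopes))
    digest = hashlib.sha256(joined.encode("utf-8")).hexdigest()[:8]
    return f"{prefix}-{digest}{suffix}"
```

In get_gdrive_service(), token_path_for(SCOPES) would then be used in place of the hard-coded 'token.pickle' in both the read and the write step.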

We will use the same get_gdrive_service() function to authenticate our account. Let's make a function that creates a folder and uploads a sample file to it:

    def upload_files():
        """
        Creates a folder and uploads a file to it
        """
        # authenticate account
        service = get_gdrive_service()
        # folder details we want to make
        folder_metadata = {
            "name": "TestFolder",
            "mimeType": "application/vnd.google-apps.folder"
        }
        # create the folder
        file = service.files().create(body=folder_metadata, fields="id").execute()
        # get the folder id
        folder_id = file.get("id")
        print("Folder ID:", folder_id)
        # upload a text file
        # first, define file metadata, such as the name and the parent folder ID
        file_metadata = {
            "name": "test.txt",
            "parents": [folder_id]
        }
        # upload
        media = MediaFileUpload("test.txt", resumable=True)
        file = service.files().create(body=file_metadata, media_body=media, fields='id').execute()
        print("File created, id:", file.get("id"))

We used the service.files().create() method to create a new folder. We passed the folder_metadata dictionary, which holds the type and the name of the folder we want to create, and we passed fields="id" to retrieve the folder ID so we can upload a file into that folder.

Next, we used the MediaFileUpload class to wrap the sample file and passed it to the same service.files().create() method. Make sure you have a test file of your choice called test.txt. This time, we specified the "parents" attribute in the metadata dictionary and set it to the folder we just created. Let's run it:

    if __name__ == '__main__':
        upload_files()

After I ran the code, a new folder was created in my Google Drive.
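
Because the upload above is created with resumable=True, the request can also be sent chunk by chunk instead of with a single execute() call, which makes it possible to report progress on large files. A minimal sketch of that loop follows; run_resumable_upload is a hypothetical helper name, and it expects the request object returned by service.files().create(...) before execute() is called:

```python
def run_resumable_upload(request, report=print):
    """Send a resumable upload request chunk by chunk, reporting progress."""
    response = None
    while response is None:
        # next_chunk() returns (status, response); response stays None
        # until the last chunk has been accepted by the server
        status, response = request.next_chunk()
        if status:
            report(f"Uploaded {int(status.progress() * 100)}%")
    return response
```

In upload_files(), this would look like request = service.files().create(body=file_metadata, media_body=media, fields='id') followed by file = run_resumable_upload(request).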

Search for Files and Directories

Google Drive lets us search for files and directories with the same list() method we used earlier, just by passing the 'q' parameter. The function below takes the Drive API service and a query and returns the matching items:

    def search(service, query):
        # search for the file
        result = []
        page_token = None
        while True:
            response = service.files().list(q=query,
                                            spaces="drive",
                                            fields="nextPageToken, files(id, name, mimeType)",
                                            pageToken=page_token).execute()
            # iterate over filtered files
            for file in response.get("files", []):
                result.append((file["id"], file["name"], file["mimeType"]))
            page_token = response.get('nextPageToken', None)
            if not page_token:
                # no more files
                break
        return result

Let's see how to use this function:

    def main():
        # filter to text files
        filetype = "text/plain"
        # authenticate Google Drive API
        service = get_gdrive_service()
        # search for files that have the type text/plain
        search_result = search(service, query=f"mimeType='{filetype}'")
        # convert to table to print well
        table = tabulate(search_result, headers=["ID", "Name", "Type"])
        print(table)

So we're filtering text/plain files here by using "mimeType='text/plain'" as the query parameter. If you want to filter by name instead, you can simply use "name='filename.ext'" as the query parameter. See the Google Drive API documentation for more detailed information.
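
Building the query with an f-string works until a file name contains a single quote, which has to be escaped with a backslash inside a query string. The sketch below shows one way to assemble a query from optional filters; quote and build_query are hypothetical helper names, and the operators used (name =, name contains, mimeType =, in parents, trashed =) are the ones described in the Drive search documentation:

```python
def quote(value):
    """Escape backslashes and single quotes for a query string literal."""
    return value.replace("\\", "\\\\").replace("'", "\\'")


def build_query(name=None, name_contains=None, mime_type=None,
                parent_id=None, include_trashed=False):
    """Combine the given filters with 'and' into a single q string."""
    clauses = []
    if name is not None:
        clauses.append(f"name = '{quote(name)}'")
    if name_contains is not None:
        clauses.append(f"name contains '{quote(name_contains)}'")
    if mime_type is not None:
        clauses.append(f"mimeType = '{quote(mime_type)}'")
    if parent_id is not None:
        # files that sit directly inside the given folder
        clauses.append(f"'{quote(parent_id)}' in parents")
    if not include_trashed:
        # leave out files that are in the trash
        clauses.append("trashed = false")
    return " and ".join(clauses)
```

The result can be passed straight to the search() function, for example search(service, query=build_query(mime_type="text/plain")).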

Let's execute this:

    if __name__ == '__main__':
        main()

Output:

    ID                                 Name           Type
    ---------------------------------  -------------  ----------
    15gdpNEYnZ8cvi3PhRjNTvW8mdfix9ojV  test.txt       text/plain
    1FaE2BVO_rnps2BFm463JwPN-gGcDdWVT  some_text.txt  text/plain

Check the full code here.

Download Files

To download a file, we first need to identify it. We can either search for it using the previous code or get its Drive ID manually. In this section, we're going to search for the file by name and download it to our local disk:

    import pickle
    import os
    import re
    import io
    from googleapiclient.discovery import build
    from google_auth_oauthlib.flow import InstalledAppFlow
    from google.auth.transport.requests import Request
    from googleapiclient.http import MediaIoBaseDownload
    import requests
    from tqdm import tqdm

    # If modifying these scopes, delete the file token.pickle.
    SCOPES = ['https://www.googleapis.com/auth/drive.metadata',
              'https://www.googleapis.com/auth/drive',
              'https://www.googleapis.com/auth/drive.file'
              ]

I've added two scopes here because we need to create a permission that makes files shareable and downloadable. Here is the main function:

    def download():
        service = get_gdrive_service()
        # the name of the file you want to download from Google Drive
        filename = "bbc.zip"
        # search for the file by name
        search_result = search(service, query=f"name='{filename}'")
        if not search_result:
            # nothing matched, so there is no file ID to use
            print(f"No file named {filename} was found.")
            return
        # get the GDrive ID of the first match
        file_id = search_result[0][0]
        # make it shareable
        service.permissions().create(body={"role": "reader", "type": "anyone"}, fileId=file_id).execute()
        # download file
        download_file_from_google_drive(file_id, filename)

You saw the first few lines in the previous sections. We simply authenticate with our Google account and search for the file we want to download.

After that, we extract the file ID and create a new permission that allows us to download the file. This is the same as creating a shareable link in the Google Drive web interface, so keep in mind that the file becomes readable by anyone who has the link.

Finally, we use our download_file_from_google_drive() function to download the file. Here it is:

    def download_file_from_google_drive(file_id, destination):
        def get_confirm_token(response):
            for key, value in response.cookies.items():
                if key.startswith('download_warning'):
                    return value
            return None

        def save_response_content(response, destination):
            CHUNK_SIZE = 32768
            # get the file size from Content-length response header
            file_size = int(response.headers.get("Content-Length", 0))
            # extract Content disposition from response headers
            content_disposition = response.headers.get("content-disposition", "")
            # parse filename, falling back to the destination if the header is missing
            matches = re.findall("filename=\"(.+)\"", content_disposition)
            filename = matches[0] if matches else destination
            print("[+] File size:", file_size)
            print("[+] File name:", filename)
            # the bar counts bytes, so it is updated manually for each chunk
            progress = tqdm(total=file_size or None, desc=f"Downloading {filename}", unit="B", unit_scale=True, unit_divisor=1024)
            with open(destination, "wb") as f:
                for chunk in response.iter_content(CHUNK_SIZE):
                    if chunk: # filter out keep-alive new chunks
                        f.write(chunk)
                        # update the progress bar
                        progress.update(len(chunk))
            progress.close()

        # base URL for download
        URL = "https://docs.google.com/uc?export=download"
        # init a HTTP session
        session = requests.Session()
        # make a request
        response = session.get(URL, params={'id': file_id}, stream=True)
        print("[+] Downloading", response.url)
        # get confirmation token
        token = get_confirm_token(response)
        if token:
            params = {'id': file_id, 'confirm': token}
            response = session.get(URL, params=params, stream=True)
        # download to disk
        save_response_content(response, destination)

Part of the above code comes from the downloading files tutorial; it simply makes a GET request to the target URL, passing the file ID as params to the session.get() method.

I've used the tqdm library to print a progress bar so we can see when the download will finish, which comes in handy for large files. Let's execute it:

    if __name__ == '__main__':
        download()

This will search for the bbc.zip file, download it, and save it in your working directory. Check the full code.
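
The imports in this section include MediaIoBaseDownload, which the code above never uses. As an alternative that avoids making the file public, here is a minimal sketch that downloads through the authenticated service instead. This swaps in a different technique from the requests-based one above. The download_via_api name is hypothetical, and the sketch assumes the token was granted a scope that allows reading file content (such as the drive scope listed above) and that the file is a regular uploaded file rather than a Google Docs document:

```python
def download_via_api(service, file_id, destination, report=print):
    """Download a file's content through the authenticated Drive service."""
    # imported here so the rest of a script still loads without the client
    from googleapiclient.http import MediaIoBaseDownload

    # get_media() builds a request for the file's raw bytes
    request = service.files().get_media(fileId=file_id)
    with open(destination, "wb") as fh:
        downloader = MediaIoBaseDownload(fh, request)
        done = False
        while not done:
            # next_chunk() returns (status, done); status tracks progress
            status, done = downloader.next_chunk()
            if status:
                report(f"Downloaded {int(status.progress() * 100)}%")
    return destination
```

In download(), calling download_via_api(service, file_id, filename) would replace both the permissions().create() call and download_file_from_google_drive().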

Conclusion

Alright, there you have it. These are the core operations of Google Drive, and now you know how to perform them in Python without manual mouse clicks!

Remember, whenever you change the SCOPES list, you need to delete the token.pickle file to authenticate to your account again with the new scopes. See this page for further information, along with a list of scopes and their explanations.

Feel free to edit the code to accept file names as parameters for downloading or uploading. Try to make the script as dynamic as possible by introducing the argparse module. Let's see what you build!
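
As a starting point for that, here is a minimal sketch of an argparse front end with one subcommand per section of this tutorial. The subcommand and option names are only suggestions, and wiring each command to the functions defined earlier is left to you:

```python
import argparse


def build_parser():
    """Create a parser with list, search, upload and download subcommands."""
    parser = argparse.ArgumentParser(description="Small Google Drive helper")
    sub = parser.add_subparsers(dest="command", required=True)

    list_cmd = sub.add_parser("list", help="list files")
    list_cmd.add_argument("-n", "--page-size", type=int, default=5)

    search_cmd = sub.add_parser("search", help="search for a file by name")
    search_cmd.add_argument("name")

    upload_cmd = sub.add_parser("upload", help="upload a local file")
    upload_cmd.add_argument("path")
    upload_cmd.add_argument("--folder", default=None, help="parent folder ID")

    download_cmd = sub.add_parser("download", help="download a file by name")
    download_cmd.add_argument("name")
    download_cmd.add_argument("-o", "--output", default=None)
    return parser


if __name__ == "__main__":
    args = build_parser().parse_args()
    # dispatch on args.command here, e.g. call download() for "download"
    print(args)
```

Running the script with the arguments download bbc.zip -o local.zip would give args.command == "download", args.name == "bbc.zip", and args.output == "local.zip".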

Below is a list of other Google APIs tutorials, if you want to check them out:

- How to Extract Google Trends Data in Python.

- How to Use Google Custom Search Engine API in Python.

- How to Extract YouTube Data using YouTube API in Python.

- How to Use Gmail API in Python.

Happy Coding ♥
